Latest Analysis

from the desk

A tactical breakdown of 2025's problems and a mini-auction strategy to build a championship squad.

Read Blueprint

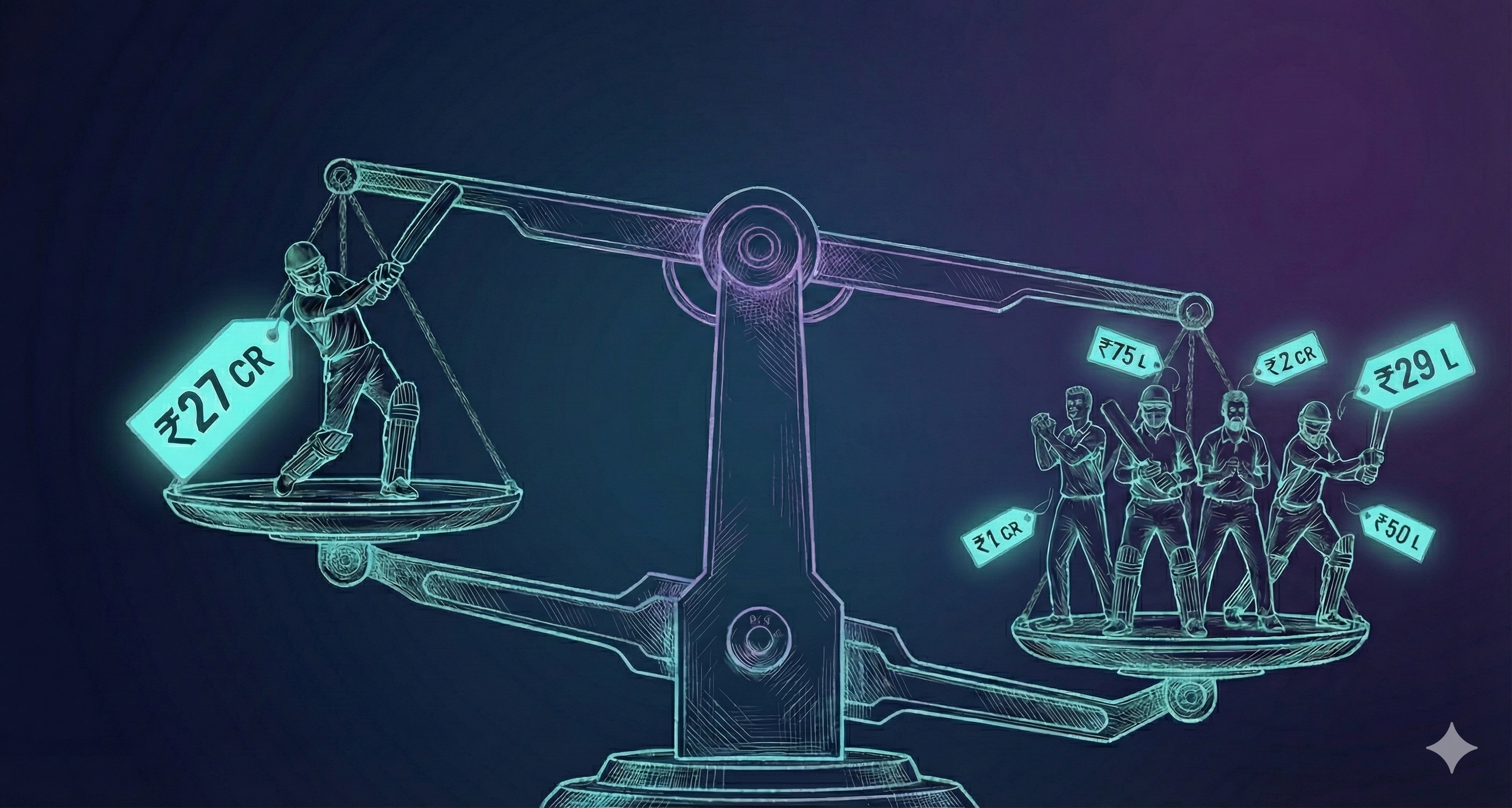

Using cricWAR and VOMAM to identify auction steals and overpays for IPL 2025.

Read Analysis

Comparing Marcel, IPL-only ML, and Global ML models against 2025 actuals.

Read Comparison

4M ball outcomes, 63 features, Monte Carlo simulation: the ML system behind the analysis.

Explore the engine